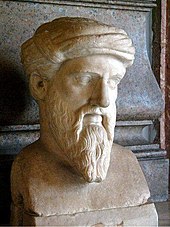

Philosophy is the study of the general and fundamental nature of reality, existence, knowledge, values, reason, mind, and language.[1][2][3] The Ancient Greek word φιλοσοφία (philosophia) was probably coined by Pythagoras[4] and literally means "love of wisdom" or "friend of wisdom".[5][6][7][8][9] Philosophy has been divided into many sub-fields. It has been divided chronologically (e.g., ancient and modern); by topic (the major topics being epistemology, logic, metaphysics, ethics, and aesthetics); and by style (e.g., analytic philosophy).

As a method, philosophy is often distinguished from other ways of addressing such problems by its questioning, critical, generally systematic approach and its reliance on rational argument.[10] As a noun, the term "philosophy" can refer to any body of knowledge.[11] Historically, these bodies of knowledge were commonly divided into natural philosophy, moral philosophy, and metaphysical philosophy.[9] In casual speech, the term can refer to any of "the most basic beliefs, concepts, and attitudes of an individual or group," (e.g., "Dr. Smith's philosophy of parenting").[12]

Areas of inquiry

Philosophy has been divided into many sub-fields. In modern universities, these sub-fields are distinguished by chronology, topic, or style.

- Chronological divisions include ancient, medieval, modern, and contemporary.[13]

- Topical divisions include epistemology, logic, metaphysics, ethics, and aesthetics.[14][15]

- Divisions of style include analytic, continental, and social/political philosophy, among others.

Some of the major areas of study are considered individually below.

Epistemology

Main article: Epistemology

Epistemology is concerned with the nature and scope of knowledge,[16] such as the relationships between truth, belief, perception and theories of justification.

Skepticism is the position that questions the possibility of completely justifying any truth. The regress argument, a fundamental problem in epistemology, arises because, in order to completely prove any statement, its justification must itself be supported by another justification. According to the Münchhausen trilemma, this chain of justifications has three possible outcomes, all of which are unsatisfactory. One option is infinitism, in which the chain of justification goes on forever. Another option is foundationalism, in which the chain eventually rests on basic beliefs or axioms that are left unproven. The last option, taken for example by coherentism, is to make the chain circular, so that a statement is included in its own chain of justification.

Rationalism is the emphasis on reasoning as a source of knowledge. Empiricism is the emphasis on observational evidence gained through sensory experience, over other evidence, as the source of knowledge. Rationalism claims that every possible object of knowledge can be deduced from coherent premises without observation. Empiricism claims that at least some knowledge is only a matter of observation. In support of this, empiricists often cite the concept of tabula rasa: the idea that individuals are born without mental content and that knowledge is built up from experience or perception. Epistemological solipsism is the idea that the existence of the world outside the mind is an unresolvable question.

Parmenides (fl. 500 BC) argued that it is impossible to doubt that thinking actually occurs. But thinking must have an object; therefore something beyond thinking really exists. Parmenides deduced that what really exists must have certain properties: for example, that it cannot come into existence or cease to exist, that it is a coherent whole, and that it remains the same eternally (in fact, that it exists altogether outside time). Plato (427–347 BC) combined rationalism with a form of realism. The philosopher's work is to consider being, and the essence (ousia) of things. The characteristic of essences, however, is that they are universal: the nature of a man, a triangle, or a tree applies to all men, all triangles, all trees. Plato argued that these essences are mind-independent "forms", which humans (but particularly philosophers) can come to know through reason, by ignoring the distractions of sense-perception.

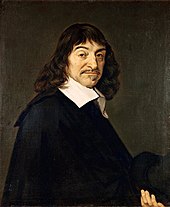

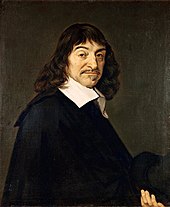

Modern rationalism begins with Descartes. Reflection on the nature of perceptual experience, as well as scientific discoveries in physiology and optics, led Descartes (and also Locke) to the view that we are directly aware of ideas, rather than objects. This view gave rise to three questions:

- Is an idea a true copy of the real thing that it represents? Sensation is not a direct interaction between bodily objects and our senses, but a physiological process involving representation (for example, an image on the retina). Locke thought that a "secondary quality" such as a sensation of green could in no way resemble the arrangement of particles in matter that produces this sensation, although he thought that "primary qualities" such as shape, size, and number were really in objects.

- How can physical objects such as chairs and tables, or even physiological processes in the brain, give rise to mental items such as ideas? This is part of what became known as the mind-body problem.

- If all the contents of awareness are ideas, how can we know that anything exists apart from ideas?

Descartes tried to address the last problem by reason. He began, echoing Parmenides, with a principle that he thought could not coherently be denied: I think, therefore I am (often given in his original Latin: Cogito ergo sum). From this principle, Descartes went on to construct a complete system of knowledge (which involves proving the existence of God, using, among other means, a version of the ontological argument).[17] His view that reason alone could yield substantial truths about reality strongly influenced those philosophers usually considered modern rationalists (such as Baruch Spinoza, Gottfried Leibniz, and Christian Wolff), while provoking criticism from other philosophers who have retrospectively come to be grouped together as empiricists.

Logic

Logic is the study of the principles of correct reasoning. Arguments use either deductive reasoning or inductive reasoning. In deductive reasoning, given certain statements (called premises), other statements (called conclusions) are unavoidably implied. The most widely used rule of inference is modus ponens: given “A” and “If A then B”, “B” must be concluded. A common form of deductive argument is the syllogism. An argument is termed valid if its conclusion follows from its premises, whether or not the premises are true; an argument is sound if it is valid and its premises are true. Propositional logic uses premises that are propositions, declarations that are either true or false, while predicate logic uses more complex premises called formulae that contain variables. These variables can be assigned values, or they can be quantified: the universal quantifier states that a formula holds for every value, and the existential quantifier states that it holds for at least one. Inductive reasoning draws conclusions or generalizations that are probable rather than certain. For example, if “90% of humans are right-handed” and “Joe is human”, then “Joe is probably right-handed”. Fields in logic include mathematical logic (formal symbolic logic) and philosophical logic.
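
The difference between validity and soundness can be made concrete with a small truth-table check. The following is a minimal illustrative sketch in Python, not a standard tool: it assumes "If A then B" is read as the material conditional and treats an argument as valid when no assignment of truth values makes every premise true and the conclusion false. The helper names `implies`, `is_valid`, and `is_sound` are hypothetical. For contrast it also checks an invalid form (affirming the consequent), and it approximates the universal and existential quantifiers with Python's `all` and `any` over a small, made-up finite domain.

```python
from itertools import product
from typing import Callable, Sequence, Tuple

# Hypothetical representation: a formula is a function from truth values to a truth value.
Formula = Callable[..., bool]


def implies(a: bool, b: bool) -> bool:
    """Material conditional (an assumption): "If A then B" is false only when A is true and B false."""
    return (not a) or b


def is_valid(premises: Sequence[Formula], conclusion: Formula, n_vars: int) -> bool:
    """Valid: no row of the truth table makes every premise true while the conclusion is false."""
    for values in product([True, False], repeat=n_vars):
        if all(p(*values) for p in premises) and not conclusion(*values):
            return False  # counterexample row found
    return True


def is_sound(premises: Sequence[Formula], conclusion: Formula, n_vars: int,
             actual: Tuple[bool, ...]) -> bool:
    """Sound: valid, and every premise is true under the actual truth values."""
    return is_valid(premises, conclusion, n_vars) and all(p(*actual) for p in premises)


# Modus ponens: from "A" and "If A then B", conclude "B".
mp_premises = [lambda a, b: a, lambda a, b: implies(a, b)]
mp_conclusion = lambda a, b: b

# Affirming the consequent: from "B" and "If A then B", conclude "A" (an invalid form).
ac_premises = [lambda a, b: b, lambda a, b: implies(a, b)]
ac_conclusion = lambda a, b: a

# Quantifiers approximated over a small, hypothetical finite domain.
domain = [2, 4, 6, 7]
every_even = all(x % 2 == 0 for x in domain)  # universal: does it hold for every value?
some_odd = any(x % 2 == 1 for x in domain)    # existential: does it hold for at least one?

if __name__ == "__main__":
    print(is_valid(mp_premises, mp_conclusion, 2))                 # True
    print(is_valid(ac_premises, ac_conclusion, 2))                 # False
    print(is_sound(mp_premises, mp_conclusion, 2, (False, True)))  # False: premise "A" is false
    print(every_even, some_odd)                                    # False True
```

Brute-force enumeration grows as 2^n in the number of variables, so this approach only suits small illustrative arguments; quantification is likewise only checkable this way over finite domains.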

Metaphysics

Main article: Metaphysics

Metaphysics is the study of the most general features of reality, such as existence, time, the relationship between mind and body, objects and their properties, wholes and their parts, events, processes, and causation. Traditional branches of metaphysics include cosmology, the study of the world in its entirety, and ontology, the study of being.

Within metaphysics itself there is a wide range of differing philosophical theories. Idealism, for example, is the belief that reality is mentally constructed or otherwise immaterial, while realism holds that reality, or at least some part of it, exists independently of the mind. Subjective idealism describes objects as no more than collections or "bundles" of sense data in the perceiver. The 18th-century philosopher George Berkeley contended that existence is fundamentally tied to perception, summed up in the phrase Esse est aut percipi aut percipere, or "To be is to be perceived or to perceive".[18]

Metaphysics also contains an ontological dichotomy between particulars and universals. Particulars are those objects that are said to exist in space and time, as opposed to abstract objects, such as numbers. Universals are properties held by multiple particulars, such as redness or a gender. The type of existence, if any, of universals and abstract objects is a matter of serious debate within metaphysical philosophy. Realism is the philosophical position that universals do in fact exist, while nominalism denies the existence of universals, abstract objects, or both.[19] Conceptualism holds that universals exist, but only within the mind's perception.[20]

The question of whether or not existence is a predicate has been discussed since the Early Modern period. Essence is the set of attributes that make an object what it fundamentally is and without which it loses its identity. Essence is contrasted with accident: a property that the substance has contingently, without which the substance can still retain its identity.

Ethics and political philosophy

Ethics, or "moral philosophy," is concerned primarily with the question of the best way to live, and secondarily with whether this question can be answered. The main branches of ethics are meta-ethics, normative ethics, and applied ethics. Meta-ethics concerns the nature of ethical thought, such as the origins of the words good and bad and of other comparative words in various ethical systems, whether there are absolute ethical truths, and how such truths could be known. Normative ethics is more concerned with how one ought to act and what the right course of action is; this is where most ethical theories are generated. Lastly, applied ethics goes beyond theory into real-world ethical practice, addressing questions such as whether abortion is morally permissible. Ethics is also associated with the idea of morality, and the two terms are often used interchangeably.

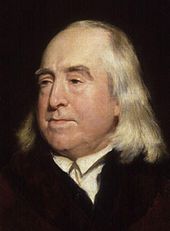

One debate that has commanded the attention of ethicists in the modern era has been between consequentialism (actions are to be morally evaluated solely by their consequences) and deontology (actions are to be morally evaluated solely by consideration of agents' duties, the rights of those whom the action concerns, or both). Jeremy Bentham and John Stuart Mill are famous for promulgating utilitarianism, which is the idea that the fundamental moral rule is to strive toward the "greatest happiness for the greatest number". However, in promoting this idea they also necessarily promoted the broader doctrine of consequentialism. Adopting a position opposed to consequentialism, Immanuel Kant argued that moral principles were simply products of reason. Kant believed that the incorporation of consequences into moral deliberation was a deep mistake, since it denies the necessity of practical maxims in governing the working of the will. According to Kant, reason requires that we conform our actions to the categorical imperative, which is an absolute duty. An important 20th-century deontologist, W.D. Ross, argued for weaker forms of duties called prima facie duties.

More recent works have emphasized the role of character in ethics, a movement known as the aretaic turn (that is, the turn towards virtues). One strain of this movement followed the work of Bernard Williams. Williams noted that rigid forms of consequentialism and deontology demanded that people behave impartially. This, Williams argued, requires that people abandon their personal projects, and hence their personal integrity, in order to be considered moral. Elizabeth Anscombe, in an influential paper, "Modern Moral Philosophy" (1958), revived virtue ethics as an alternative to what was seen as the entrenched positions of Kantianism and consequentialism. Aretaic perspectives have been inspired in part by research into ancient conceptions of virtue. For example, Aristotle's ethics demands that people follow the Aristotelian mean, or balance between two vices; and Confucian ethics argues that virtue consists largely in striving for harmony with other people. Virtue ethics in general has since gained many adherents, and has been defended by such philosophers as Philippa Foot, Alasdair MacIntyre, and Rosalind Hursthouse.

Political philosophy is the study of government and the relationship of individuals (or families and clans) to communities including the state. It includes questions about justice, law, property, and the rights and obligations of the citizen. Politics and ethics are traditionally inter-linked subjects, as both discuss the question of what is good and how people should live. From ancient times, and well beyond them, the roots of justification for political authority were inescapably tied to outlooks on human nature. In The Republic, Plato presented the argument that the ideal society would be run by a council of philosopher-kings, since those best at philosophy are best able to realize the good. Even Plato, however, required philosophers to make their way in the world for many years before beginning their rule at the age of fifty.

For Aristotle, humans are political animals (i.e., social animals), and governments are set up to pursue good for the community. Aristotle reasoned that, since the state (polis) was the highest form of community, it has the purpose of pursuing the highest good. Aristotle viewed political power as the result of natural inequalities in skill and virtue. Because of these differences, he favored an aristocracy of the able and virtuous. For Aristotle, the person cannot be complete unless he or she lives in a community. His Nicomachean Ethics and Politics are meant to be read in that order. The first work addresses virtues (or "excellences") in the person as a citizen; the second addresses the proper form of government to ensure that citizens will be virtuous, and therefore complete. Both works deal with the essential role of justice in civic life.

Nicolas of Cusa rekindled Platonic thought in the early 15th century. He promoted democracy in medieval Europe, both in his writings and in his organization of the Council of Florence. Unlike Aristotle and the Hobbesian tradition that followed, Cusa saw human beings as equal and divine (that is, made in God's image), so democracy would be the only just form of government. Cusa's views are credited by some with sparking the Italian Renaissance, which gave rise to the notion of "Nation-States".

Later, Niccolò Machiavelli rejected the views of Aristotle and Thomas Aquinas as unrealistic. For Machiavelli, the ideal sovereign is not the embodiment of the moral virtues; rather, the sovereign does whatever is successful and necessary, not what is morally praiseworthy. Thomas Hobbes also contested many elements of Aristotle's views. For Hobbes, human nature is essentially anti-social: people are essentially egoistic, and this egoism makes life difficult in the natural state of things. Moreover, Hobbes argued, though people may have natural inequalities, these are trivial, since no particular talents or virtues that people may have will make them safe from harm inflicted by others. For these reasons, Hobbes concluded that the state arises from a common agreement to raise the community out of the state of nature. This can only be done by establishing a sovereign in which (or whom) complete control over the community is vested, and which is able to inspire awe and terror in its subjects.[21]

Many in the Enlightenment were unsatisfied with existing doctrines in political philosophy, which seemed to marginalize or neglect the possibility of a democratic state. Jean-Jacques Rousseau was among those who attempted to overturn these doctrines: he responded to Hobbes by claiming that a human is by nature a kind of "noble savage", and that society and social contracts corrupt this nature. Another critic was John Locke. In his Second Treatise of Government he agreed with Hobbes that the nation-state was an efficient tool for raising humanity out of a deplorable state, but he argued that the sovereign might become an abominable institution compared to the relatively benign, unmodulated state of nature.[22]

With the rise of the fact-value distinction, due in part to the influence of David Hume and his friend Adam Smith, appeals to human nature for political justification were weakened. Nevertheless, many political philosophers, especially moral realists, still make use of some essential human nature as a basis for their arguments.

Marxism is derived from the work of Karl Marx and Friedrich Engels. Their ideas, including the claim that capitalism is based on the exploitation of workers and alienates people from their human nature, historical materialism, and their view of social classes, have influenced many fields of study, such as sociology, economics, and politics. Marxism inspired the Marxist school of communism, which had a major impact on the history of the 20th century.

Aesthetics

Aesthetics deals with beauty, art, enjoyment, sensory-emotional values, perception, and matters of taste and sentiment. It is a branch of philosophy dealing with the nature of art, beauty, and taste, and with the creation and appreciation of beauty.[23][24] It is more scientifically defined as the study of sensory or sensori-emotional values, sometimes called judgments of sentiment and taste.[25] More broadly, scholars in the field define aesthetics as "critical reflection on art, culture and nature."[26][27]

More specific aesthetic theory, often with practical implications, relating to a particular branch of the arts is divided into areas of aesthetics such as art theory, literary theory, film theory and music theory. An example from art theory is aesthetic theory as a set of principles underlying the work of a particular artist or artistic movement: such as the Cubist aesthetic.[28]

Specialized branches

+- Philosophy of history refers to the theoretical aspect of history.

+- Philosophy of language explores the nature, the origins, and the use of language.

+- Philosophy of law (often called jurisprudence) explores the varying theories explaining the nature and the interpretations of law.

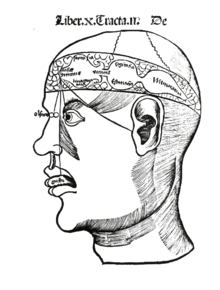

+- Philosophy of mind explores the nature of the mind, and its relationship to the body, and is typified by disputes between dualism and materialism. In recent years there has been increasing similarity between this branch of philosophy and cognitive science.

+- Philosophy of religion explores questions that often arise in connection with one or several religions, including the soul, the afterlife, God, religious experiences, analysis of religious vocabulary and texts, and the relationship of religion and science.

+- Philosophy of science explores the foundations, methods, history, implications, and purpose of science.

+- Feminist philosophy explores questions surrounding gender, sexuality, and the body including the nature of feminism itself as a social and philosophical movement.

+- Philosophy of film analyzes films and filmmakers for their philosophical content and style explores film (images, cinema, etc.) as a medium for philosophical reflection and expression.

+- Metaphilosophy explores the aims of philosophy, its boundaries, and its methods.

Many academic disciplines have also generated philosophical inquiry. These include history, logic, and mathematics.

History

Many societies have considered philosophical questions and built philosophical traditions based upon each other's works.

Eastern philosophy is organized by the chronological periods of each region. Historians of western philosophy usually divide the subject into three or more periods, the most important being ancient philosophy, medieval philosophy, and modern philosophy.[29]

Ancient philosophy

In Western philosophy, the spread of Christianity through the Roman Empire marked the end of Hellenistic philosophy and ushered in the beginnings of Medieval philosophy, whereas in Eastern philosophy, the spread of Islam through the Arab Empire marked the end of Old Iranian philosophy and ushered in the beginnings of early Islamic philosophy. Genuinely philosophical thought, depending upon original individual insights, arose in many cultures roughly contemporaneously. Karl Jaspers termed the intense period of philosophical development beginning around the 7th century and concluding around the 3rd century BCE an Axial Age in human thought.

Egypt and Babylon

There are authors who date the philosophical maxims of Ptahhotep before the 25th century BCE. For instance, Pulitzer Prize–winning historian Will Durant dates these writings as early as 2880 BCE in The Story of Civilization: Our Oriental History. Durant claims that Ptahhotep could be considered the very first philosopher by virtue of having the earliest surviving fragments of moral philosophy (i.e., "The Maxims of Ptah-Hotep").[30] Ptahhotep's grandson, Ptahhotep Tshefi, is traditionally credited with being the author of the collection of wise sayings known as The Maxims of Ptahhotep,[31] whose opening lines attribute authorship to the vizier Ptahhotep: "Instruction of the Mayor of the city, the Vizier Ptahhotep, under the Majesty of King Isesi".

The origins of Babylonian philosophy can be traced back to the wisdom of early Mesopotamia, which embodied certain philosophies of life, particularly ethics, in the forms of dialectic, dialogues, epic poetry, folklore, hymns, lyrics, prose, and proverbs. The reasoning and rationality of the Babylonians developed beyond empirical observation.[32] The Babylonian text Dialog of Pessimism contains similarities to the agnostic thought of the sophists, the Heraclitean doctrine of contrasts, and the dialogues of Plato, as well as a precursor to the maieutic Socratic method of Socrates and Plato.[33] The Milesian philosopher Thales is also traditionally said to have studied philosophy in Mesopotamia.

Ancient Chinese

Confucius, illustrated in Myths & Legends of China, 1922, by E.T.C. Werner.

Philosophy has had a tremendous effect on Chinese civilization and throughout East Asia. The majority of Chinese philosophy originates in the Spring and Autumn and Warring States era, during a period known as the "Hundred Schools of Thought",[34] which was characterized by significant intellectual and cultural developments.[34] It was during this era that the major philosophies of China (Confucianism, Mohism, Legalism, and Taoism) arose, along with philosophies that later fell into obscurity, such as Agriculturalism, Chinese Naturalism, and the Logicians. Of the many philosophical schools of China, only Confucianism and Taoism survived after the Qin Dynasty suppressed any Chinese philosophy that was opposed to Legalism.

Confucianism is a humanistic[35] philosophy which holds that human beings are teachable, improvable, and perfectible through personal and communal endeavour, especially including self-cultivation and self-creation. Confucianism focuses on the cultivation of virtue and the maintenance of ethics, the most basic of which are ren, yi, and li.[36] Ren is an obligation of altruism and humaneness toward other individuals within a community, yi is the upholding of righteousness and the moral disposition to do good, and li is a system of norms and propriety that determines how a person should properly act within a community.[36]

Taoism focuses on establishing harmony with the Tao, which is the origin and the totality of everything that exists. The word "Tao" (or "Dao", depending on the romanization scheme) is usually translated as "way", "path" or "principle". Taoist propriety and ethics emphasize the Three Jewels of the Tao: compassion, moderation, and humility, while Taoist thought generally focuses on nature; the relationship between humanity and the cosmos (天人相应); health and longevity; and wu wei, action through inaction. Harmony with the Universe, or with its origin through the Tao, is the intended result of many Taoist rules and practices.

Ancient Graeco-Roman

Ancient Graeco-Roman philosophy is a period of Western philosophy extending from the 6th century BC (c. 585 BC) to the 6th century AD. It is usually divided into three periods: the pre-Socratic period, the Ancient Classical Greek period of Plato and Aristotle, and the post-Aristotelian (or Hellenistic) period. A fourth period that is sometimes added includes the Neoplatonic and Christian philosophers of Late Antiquity. The most important of the ancient philosophers (in terms of subsequent influence) are Plato and Aristotle.[37] Plato in particular is credited as the founder of Western philosophy. The philosopher Alfred North Whitehead said of Plato: "The safest general characterization of the European philosophical tradition is that it consists of a series of footnotes to Plato. I do not mean the systematic scheme of thought which scholars have doubtfully extracted from his writings. I allude to the wealth of general ideas scattered through them."[38]

In Roman antiquity it was said that Pythagoras was the first man to call himself a philosopher, or lover of wisdom,[39] and Pythagorean ideas exercised a marked influence on Plato and, through him, on all of Western philosophy. Plato and Aristotle, the first Classical Greek philosophers, referred critically to other, simpler "wise men", called in Greek "sophists", who were common before Pythagoras' time. Their critique suggests that a distinction was established in their own Classical period between the more elevated and pure "lovers of wisdom" (the true philosophers) and these other, earlier and more common, traveling teachers, who often also earned money from their craft.

The main subjects of ancient philosophy are: understanding the fundamental causes and principles of the universe; explaining it in an economical way; the epistemological problem of reconciling the diversity and change of the natural universe with the possibility of obtaining fixed and certain knowledge about it; and questions about things that cannot be perceived by the senses, such as numbers, elements, universals, and gods. Socrates is said to have initiated a more focused study of human affairs, including the analysis of patterns of reasoning and argument; the nature of the good life and the importance of understanding and knowledge in pursuing it; and the explication of the concept of justice and its relation to various political systems.[37]

In this period the crucial features of the Western philosophical method were established: a critical approach to received or established views, and the appeal to reason and argumentation. This includes Socrates' dialectic method of inquiry, known as the Socratic method or method of "elenchus", which he largely applied to the examination of key moral concepts such as the Good and Justice. To solve a problem, it would be broken down into a series of questions whose answers gradually distill the answer a person seeks. The influence of this approach is most strongly felt today in the scientific method, in which forming a hypothesis is the first stage.

Ancient Indian

The term Indian philosophy (Sanskrit: Darshanas) refers to any of several schools of philosophical thought that originated in the Indian subcontinent, including Hindu philosophy, Buddhist philosophy, and Jain philosophy. Having the same or closely intertwined origins, all of these philosophies share the common underlying themes of Dharma and Karma, and similarly attempt to explain the attainment of Moksha (liberation). They were formalized and promulgated chiefly between 1000 BC and a few centuries AD.

India's philosophical tradition dates back to the composition of the Upanisads[40] in the later Vedic period (c. 1000-500 BCE). Subsequent schools (Skt: Darshanas) of Indian philosophy were identified as orthodox (Skt: astika) or non-orthodox (Skt: nastika), depending on whether or not they regarded the Vedas as an infallible source of knowledge.[41] In the history of the Indian subcontinent, following the establishment of a Vedic culture, the development of philosophical and religious thought over a period of two millennia gave rise to what came to be called the six schools of astika, or orthodox, Indian or Hindu philosophy. These schools have come to be synonymous with the greater religion of Hinduism, which was a development of the early Vedic religion. Schools of Hindu philosophy are Nyaya, Vaisesika, Samkhya, Yoga, Purva mimamsa and Vedanta. Other classifications also include Pashupata, Saiva, Raseśvara and Pāṇini Darśana with the other orthodox schools.[42]

Jain philosophy revolves around the concept of ahimsā (non-violence). The major contribution of Jain philosophy was the doctrine of Anekantavada (multiplicity of viewpoints). According to Jain epistemology, knowledge is of five kinds: sensory knowledge, scriptural knowledge, clairvoyance, telepathy, and omniscience.[43]

Buddhist philosophy and materialist (Cārvāka) philosophy refuted the idea of an eternal soul.

Competition and integration between the various schools was intense during their formative years, especially between 500 BC and 200 AD. Some, like the Jain, Buddhist, Shaiva, and Vedanta schools, survived, while others, like Samkhya and Ajivika, did not, either being assimilated or going extinct. The Sanskrit term for "philosopher" is dārśanika, one who is familiar with the systems of philosophy, or darśanas.[44]

Ancient Persian

Persian philosophy can be traced back as far as Old Iranian philosophical traditions and thought, with their ancient Indo-Iranian roots. These were considerably influenced by Zarathustra's teachings. Throughout Iranian history, and due to remarkable political and social influences such as the Macedonian, Arab, and Mongol invasions of Persia, a wide spectrum of schools of thought arose. These espoused a variety of views on philosophical questions, extending from Old Iranian and mainly Zoroastrianism-influenced traditions to schools appearing in the late pre-Islamic era, such as Manicheism and Mazdakism, as well as various post-Islamic schools. Iranian philosophy after the Arab invasion of Persia is characterized by different interactions with Old Iranian philosophy, Greek philosophy, and the development of Islamic philosophy. Illuminationism and transcendent theosophy are regarded as two of the main philosophical traditions of that era in Persia. The emergence of Zoroastrianism has been identified as one of the key early events in the development of philosophy.[45]

5th–16th centuries

Europe

Medieval

Medieval philosophy is the philosophy of Western Europe and the Middle East during the Middle Ages, roughly extending from the Christianization of the Roman Empire until the Renaissance.[46] Medieval philosophy is defined partly by the rediscovery and further development of classical Greek and Hellenistic philosophy, and partly by the need to address theological problems and to integrate the then widespread sacred doctrines of Abrahamic religion (Islam, Judaism, and Christianity) with secular learning.

The history of Western European medieval philosophy is traditionally divided into two main periods: the period in the Latin West following the Early Middle Ages until the 12th century, when the works of Aristotle and Plato were preserved and cultivated; and the "golden age"[citation needed] of the 12th, 13th and 14th centuries in the Latin West, which witnessed the culmination of the recovery of ancient philosophy, and significant developments in the fields of philosophy of religion, logic and metaphysics.

The medieval era was treated disparagingly by the Renaissance humanists, who saw it as a barbaric "middle" period between the classical age of Greek and Roman culture and the "rebirth" or renaissance of classical culture. Yet this period of nearly a thousand years was the longest period of philosophical development in Europe, and possibly the richest. Jorge Gracia has argued that "in intensity, sophistication, and achievement, the philosophical flowering in the thirteenth century could be rightly said to rival the golden age of Greek philosophy in the fourth century B.C."[47]

Some problems discussed throughout this period are the relation of faith to reason, the existence and unity of God, the object of theology and metaphysics, the problems of knowledge, of universals, and of individuation.

Philosophers from the Middle Ages include the Christian philosophers Augustine of Hippo, Boethius, Anselm, Gilbert of Poitiers, Peter Abelard, Roger Bacon, Bonaventure, Thomas Aquinas, Duns Scotus, William of Ockham and Jean Buridan; the Jewish philosophers Maimonides and Gersonides; and the Muslim philosophers Alkindus, Alfarabi, Alhazen, Avicenna, Algazel, Avempace, Abubacer, Ibn Khaldūn, and Averroes. The medieval tradition of Scholasticism continued to flourish as late as the 17th century, in figures such as Francisco Suárez and John of St. Thomas.

Aquinas, the father of Thomism, was immensely influential in Catholic Europe; he placed great emphasis on reason and argumentation, and was one of the first to use the new translations of Aristotle's metaphysical and epistemological writings. His work was a significant departure from the Neoplatonic and Augustinian thinking that had dominated much of early Scholasticism.

Renaissance

The Renaissance ("rebirth") was a period of transition between the Middle Ages and modern thought,[48] in which the recovery of classical texts helped shift philosophical interests away from technical studies in logic, metaphysics, and theology towards eclectic inquiries into morality, philology, and mysticism.[49][50] The study of the classics and the humane arts generally, such as history and literature, enjoyed a scholarly interest hitherto unknown in Christendom, a tendency referred to as humanism.[51][52] Displacing the medieval interest in metaphysics and logic, the humanists followed Petrarch in making man and his virtues the focus of philosophy.[53][54]

The study of classical philosophy also developed in two new ways. On the one hand, the study of Aristotle was changed through the influence of Averroism. The disagreements between these Averroist Aristotelians and more orthodox Catholic Aristotelians such as Albertus Magnus and Thomas Aquinas eventually contributed to the development of a "humanist Aristotelianism" in the Renaissance, as exemplified in the thought of Pietro Pomponazzi and Giacomo Zabarella. On the other hand, as an alternative to Aristotle, the study of Plato and the Neoplatonists became common. This was assisted by the rediscovery of works that had not previously been well known in Western Europe. Notable Renaissance Platonists include Nicholas of Cusa, and later Marsilio Ficino and Giovanni Pico della Mirandola.[54]

The Renaissance also renewed interest in anti-Aristotelian theories of nature considered as an organic, living whole comprehensible independently of theology, as in the work of Nicholas of Cusa, Nicolaus Copernicus, Giordano Bruno, Telesius, and Tommaso Campanella.[55] Such movements in natural philosophy dovetailed with a revival of interest in occultism, magic, hermeticism, and astrology, which were thought to yield hidden ways of knowing and mastering nature (e.g., in Marsilio Ficino and Giovanni Pico della Mirandola).[56]

These new movements in philosophy developed contemporaneously with larger religious and political transformations in Europe: the Reformation and the decline of feudalism. Though the theologians of the Protestant Reformation showed little direct interest in philosophy, their destruction of the traditional foundations of theological and intellectual authority harmonized with a revival of fideism and skepticism in thinkers such as Erasmus, Montaigne, and Francisco Sanches.[57][58][59] Meanwhile, the gradual centralization of political power in nation-states was echoed by the emergence of secular political philosophies, as in the works of Niccolò Machiavelli (often described as the first modern political thinker, or a key turning point towards modern political thinking[60]), Thomas More, Erasmus, Justus Lipsius, Jean Bodin, and Hugo Grotius.[61][62]

East Asia

Mid-Imperial Chinese philosophy is primarily defined by the development of Neo-Confucianism. During the Tang Dynasty, Buddhism from Nepal also became a prominent philosophical and religious discipline. (Philosophy and religion were clearly distinguished in the West, whereas these concepts were more continuous in the East owing, for example, to the philosophical concepts of Buddhism.)

Neo-Confucianism is a philosophical movement that advocated a more rationalist and secular form of Confucianism by rejecting superstitious and mystical elements of Daoism and Buddhism that had influenced Confucianism during and after the Han Dynasty.[63] Although the Neo-Confucianists were critical of Daoism and Buddhism,[64] both traditions did influence the movement, and the Neo-Confucianists borrowed terms and concepts from each. However, unlike the Buddhists and Daoists, who saw metaphysics as a catalyst for spiritual development, religious enlightenment, and immortality, the Neo-Confucianists used metaphysics as a guide for developing a rationalist ethical philosophy.[65]

Neo-Confucianism has its origins in the Tang Dynasty; the Confucianist scholars Han Yu and Li Ao are seen as forebears of the Neo-Confucianists of the Song Dynasty.[64] The Song Dynasty philosopher Zhou Dunyi is seen as the first true "pioneer" of Neo-Confucianism, using Daoist metaphysics as a framework for his ethical philosophy.[65]

Elsewhere in East Asia, Japanese philosophy began to develop as indigenous Shinto beliefs fused with Buddhism, Confucianism and other schools of Chinese and Indian philosophy. As in Japan, Korean philosophy integrated the emotional content of Shamanism into the Neo-Confucianism imported from China. Vietnamese philosophy was also influenced heavily by Confucianism in this period.[citation needed]

India

The period between the 5th and 9th centuries CE was the most brilliant epoch in the development of Indian philosophy, as Hindu and Buddhist philosophies flourished side by side.[66] Of these various schools of thought, the non-dualistic Advaita Vedanta emerged as the most influential[67] and most dominant school of philosophy.[68] The major philosophers of this school were Gaudapada, Adi Shankara and Vidyaranya.

Advaita Vedanta rejects theism and dualism by insisting that “Brahman [ultimate reality] is without parts or attributes...one without a second.” Since Brahman has no properties, contains no internal diversity and is identical with the whole of reality, it cannot be understood as God.[69] Though indescribable, Brahman is at best described by Shankara as Satchidananda (merging "Sat" + "Chit" + "Ananda", i.e., Existence, Consciousness and Bliss). Advaita ushered in a new era in Indian philosophy, and as a result many new schools of thought arose in the medieval period. Some of them were Visishtadvaita (qualified monism), Dvaita (dualism), Dvaitadvaita (dualism-nondualism), Suddhadvaita (pure non-dualism), Achintya Bheda Abheda and Pratyabhijña (the recognitive school).

Middle East

In early Islamic thought, which refers to philosophy during the "Islamic Golden Age", traditionally dated between the 8th and 12th centuries, two main currents may be distinguished. The first is Kalam, which mainly dealt with Islamic theological questions; its schools include the Mu'tazili and Ash'ari. The other is Falsafa, which was founded on interpretations of Aristotelianism and Neoplatonism. Later philosopher-theologians attempted to harmonize both trends, notably Ibn Sina (Avicenna), who founded the school of Avicennism, Ibn Rushd (Averroës), who founded the school of Averroism, and others such as Ibn al-Haytham (Alhacen) and Abū Rayhān al-Bīrūnī.

Avicenna proposed his "Floating Man" thought experiment concerning self-awareness, in which a man deprived of sense experience by being blindfolded and in free fall would still be aware of his own existence.[70]

In epistemology, Ibn Tufail wrote the novel Hayy ibn Yaqdhan, and in response Ibn al-Nafis wrote the novel Theologus Autodidactus. Both concern autodidacticism as illustrated through the life of a feral child spontaneously generated in a cave on a desert island.

Mesoamerica

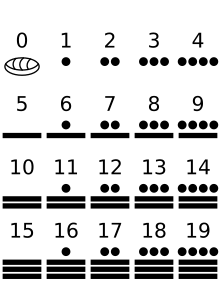

Aztec philosophy was the school of philosophy developed by the Aztec Empire. The Aztecs had a well-developed school of philosophy, perhaps the most developed in the Americas and in many ways comparable to Greek philosophy, even amassing more texts than the ancient Greeks.[71] Aztec philosophy focused on dualism, monism, and aesthetics, and Aztec philosophers attempted to answer the main Aztec philosophical question of how to gain stability and balance in an ephemeral world.

Aztec philosophy saw the concept of Ometeotl as a unity that underlies the universe. Ometeotl forms, shapes, and is all things. Even things in opposition—light and dark, life and death—were seen as expressions of the same unity, Ometeotl. The belief in a unity with dualistic expressions compares with similar dialectical monist ideas in both Western and Eastern philosophies.[72] Aztec priests had a panentheistic view of religion but the popular Aztec religion maintained polytheism. Priests saw the different gods as aspects of the singular and transcendent unity of teotl but the masses were allowed to practice polytheism without understanding the true, unified nature of the Aztec gods.[72]

Africa

Ethiopian philosophy is the philosophical corpus of the territories of present-day Ethiopia and Eritrea. Besides being transmitted through oral tradition, it was preserved early on in written form in Ge'ez manuscripts. This philosophy occupies a unique position within African philosophy. The character of Ethiopian philosophy is determined by the particular conditions of evolution of the Ethiopian culture. Thus, Ethiopian philosophy arises from the confluence of Greek and Patristic philosophy with traditional Ethiopian modes of thought. Because of its early isolation from its sources of Abrahamic spirituality, Byzantium and Alexandria, Ethiopia received some of its philosophical heritage through Arabic versions.

The sapiential literature developed under these circumstances is the result of a twofold effort of creative assimilation: on one side, a tuning of Orthodoxy to traditional modes of thought (never eradicated), and vice versa; and, on the other side, the absorption of Greek pagan and early Patristic thought into this developing Ethiopian-Christian synthesis. As a consequence, moral reflection of religious inspiration is prevalent, and narrative, parable, apothegm and rich imagery are preferred to abstract argument. This sapiential literature consists of translations and adaptations of some Greek texts, namely the Physiolog (c. 5th century AD), The Life and Maxims of Skendes (11th century AD) and The Book of the Wise Philosophers (1510/22).

In the 17th century, the religious beliefs of Ethiopians were challenged by King Suseynos' adoption of Catholicism and by the subsequent presence of Jesuit missionaries. The attempt to forcibly impose Catholicism upon his subjects during Suseynos' reign inspired further development of Ethiopian philosophy during the 17th century. Zera Yacob (1599–1692) is the most important exponent of this renaissance. His treatise Hatata (1667) is a work often included in the narrow canon of universal philosophy.

17th–20th centuries

Early modern philosophy

Chronologically, the early modern era of Western philosophy is usually identified with the 17th and 18th centuries, with the 18th century often being referred to as the Enlightenment.[73] Modern philosophy is distinguished from its predecessors by its increasing independence from traditional authorities such as the Church, academia, and Aristotelianism;[74][75] a new focus on the foundations of knowledge and metaphysical system-building;[76][77] and the emergence of modern physics out of natural philosophy.[78]

Other central topics of philosophy in this period include the nature of the mind and its relation to the body, the implications of the new natural sciences for traditional theological topics such as free will and God, and the emergence of a secular basis for moral and political philosophy.[79] These trends first coalesced distinctively in Francis Bacon's call for a new, empirical program for expanding knowledge, and soon found a massively influential form in the mechanical physics and rationalist metaphysics of René Descartes.[80]

Thomas Hobbes was the first to apply this methodology systematically to political philosophy and is the originator of modern political philosophy, including the modern theory of a "social contract".[81][82] The academic canon of early modern philosophy generally includes Descartes, Spinoza, Leibniz, Locke, Berkeley, Hume, and Kant,[83][84][85] though influential contributions to philosophy were made by many thinkers in this period, such as Galileo Galilei, Pierre Gassendi, Blaise Pascal, Nicolas Malebranche, Isaac Newton, Christian Wolff, Montesquieu, Pierre Bayle, Thomas Reid, Jean d'Alembert, and Adam Smith. Jean-Jacques Rousseau was a seminal figure in initiating reaction against the Enlightenment. The approximate end of the early modern period is most often identified with Immanuel Kant's systematic attempt to limit metaphysics, justify scientific knowledge, and reconcile both of these with morality and freedom.[86][87][88]

19th-century philosophy

Later modern philosophy is usually considered to begin after the philosophy of Immanuel Kant at the beginning of the 19th century.[89] German philosophy exercised broad influence in this century, owing in part to the dominance of the German university system.[90] German idealists, such as Johann Gottlieb Fichte, Georg Wilhelm Friedrich Hegel, and Friedrich Wilhelm Joseph Schelling, transformed the work of Kant by maintaining that the world is constituted by a rational or mind-like process, and as such is entirely knowable.[91] Arthur Schopenhauer's identification of this world-constituting process as an irrational will to live influenced later 19th- and early 20th-century thinking, such as the work of Friedrich Nietzsche and Sigmund Freud.

After Hegel's death in 1831, 19th-century philosophy largely turned against idealism in favor of varieties of philosophical naturalism, such as the positivism of Auguste Comte, the empiricism of John Stuart Mill, and the materialism of Karl Marx. Logic began a period of its most significant advances since the inception of the discipline, as increasing mathematical precision opened entire fields of inference to formalization in the work of George Boole and Gottlob Frege.[92] Other philosophers who initiated lines of thought that would continue to shape philosophy into the 20th century include:

20th-century and 21st-century philosophy

Within the last century, philosophy has increasingly become a professional discipline practiced within universities, like other academic disciplines. Accordingly, it has become less general and more specialized. In the view of one prominent recent historian: "Philosophy has become a highly organized discipline, done by specialists primarily for other specialists. The number of philosophers has exploded, the volume of publication has swelled, and the subfields of serious philosophical investigation have multiplied. Not only is the broad field of philosophy today far too vast to be embraced by one mind, something similar is true even of many highly specialized subfields."[93]

In the English-speaking world, analytic philosophy became the dominant school for much of the 20th century. In the first half of the century, it was a cohesive school, shaped strongly by logical positivism and united by the notion that philosophical problems could and should be solved by attention to logic and language. The pioneering work of Bertrand Russell was a model for the early development of analytic philosophy, moving from a rejection of the idealism dominant in late 19th-century British philosophy to a neo-Humean empiricism, strengthened by the conceptual resources of modern mathematical logic.[94][95][96]

In the latter half of the 20th century, analytic philosophy diffused into a wide variety of disparate philosophical views, only loosely united by historical lines of influence and a self-identified commitment to clarity and rigor. The post-war transformation of the analytic program led in two broad directions: on one hand, an interest in ordinary language as a way of avoiding or redescribing traditional philosophical problems, and on the other, a more thoroughgoing naturalism that sought to dissolve the puzzles of modern philosophy via the results of the natural sciences (such as cognitive psychology and evolutionary biology). The shift in the work of Ludwig Wittgenstein, from a view congruent with logical positivism to a therapeutic dissolution of traditional philosophy as a linguistic misunderstanding of normal forms of life, was the most influential version of the first direction in analytic philosophy.[97][98] The later work of Russell and the philosophy of Willard Van Orman Quine are influential exemplars of the naturalist approach dominant in the second half of the 20th century.[99][100][101][102] But the diversity of analytic philosophy from the 1970s onward defies easy generalization: the naturalism of Quine and his epigoni was in some precincts superseded by a "new metaphysics" of possible worlds, as in the influential work of David Lewis.[103][104] Recently, the experimental philosophy movement has sought to reappraise philosophical problems through social science research techniques.

In continental Europe, no single school or temperament enjoyed dominance. The flight of the logical positivists from central Europe during the 1930s and 1940s, however, diminished philosophical interest in natural science, and an emphasis on the humanities, broadly construed, figures prominently in what is usually called "continental philosophy". 20th-century movements such as phenomenology, existentialism, modern hermeneutics, critical theory, structuralism, and poststructuralism are included within this loose category. The founder of phenomenology, Edmund Husserl, sought to study consciousness as experienced from a first-person perspective,[105][106] while Martin Heidegger drew on the ideas of Kierkegaard, Nietzsche, and Husserl to propose an unconventional existential approach to ontology.[107][108]

In the Arabic-speaking world, Arab nationalist philosophy became the dominant school of thought, with philosophers such as Michel Aflaq, Zaki al-Arsuzi and Salah al-Din al-Bitar of Ba'athism, as well as Sati' al-Husri.

Major traditions

German idealism

Forms of idealism were prevalent in philosophy from the 18th century to the early 20th century. Transcendental idealism, advocated by Immanuel Kant, is the view that there are limits on what can be understood, since there is much that cannot be brought under the conditions of objective judgment. Kant wrote his Critique of Pure Reason (1781–1787) in an attempt to reconcile the conflicting approaches of rationalism and empiricism, and to establish a new groundwork for studying metaphysics. Kant's intention with this work was to look at what we know and then consider what must be true about it, as a logical consequence of the way we know it. One major theme was that there are fundamental features of reality that escape our direct knowledge because of the natural limits of the human faculties.[109] Although Kant held that objective knowledge of the world required the mind to impose a conceptual or categorical framework on the stream of pure sensory data—a framework including space and time themselves—he maintained that things-in-themselves existed independently of our perceptions and judgments; he was therefore not an idealist in any simple sense. Kant's account of things-in-themselves is both controversial and highly complex. Continuing his work, Johann Gottlieb Fichte and Friedrich Schelling dispensed with belief in the independent existence of the world, and created a thoroughgoing idealist philosophy.

The most notable work of German idealism was G. W. F. Hegel's Phenomenology of Spirit, of 1807. Hegel acknowledged that his ideas were not new, but held that all previous philosophies had been incomplete; his goal was to complete their work correctly. Hegel asserts that the twin aims of philosophy are to account for the contradictions apparent in human experience (which arise, for instance, out of the supposed contradictions between "being" and "not being"), and simultaneously to resolve and preserve these contradictions by showing their compatibility at a higher level of examination ("being" and "not being" are resolved with "becoming"). This program of acceptance and reconciliation of contradictions is known as the "Hegelian dialectic". Philosophers influenced by Hegel include Ludwig Andreas Feuerbach, who coined the term "projection" for our inability to recognize anything in the external world without projecting qualities of ourselves upon those things; Karl Marx; Friedrich Engels; and the British idealists, notably T. H. Green, J. M. E. McTaggart and F. H. Bradley.

Few 20th-century philosophers have embraced idealism. However, quite a few have embraced Hegelian dialectic. Immanuel Kant's "Copernican Turn" also remains an important philosophical concept today.

Pragmatism

Pragmatism was founded in the spirit of finding a scientific concept of truth that does not depend on personal insight (revelation) or reference to some metaphysical realm. The meaning or purport of a statement should be judged by the effect its acceptance would have on practice. Truth is that opinion which inquiry taken far enough would ultimately reach.[110] For Charles Sanders Peirce these were principles of the inquirer's self-regulation, implied by the idea and hope that inquiry is not generally fruitless. The details of how these principles should be interpreted have been subject to discussion since Peirce first conceived them. Peirce's maxim of pragmatism is as follows: "Consider what effects, which might conceivably have practical bearings, we conceive the object of our conception to have. Then, our conception of these effects is the whole of our conception of the object."[111] Many, like the postmodern neo-pragmatist Richard Rorty, are convinced that pragmatism asserts that the truth of beliefs does not consist in their correspondence with reality, but in their usefulness and efficacy.[112]

The late 19th-century American philosophers Charles Sanders Peirce and William James were its co-founders, and it was later developed by John Dewey as instrumentalism. Since the usefulness of any belief at any time might be contingent on circumstance, Peirce and James conceptualised final truth as something only established by the future, final settlement of all opinion.[113] Critics have accused pragmatism of falling victim to a simple fallacy: because something that is true proves useful, that usefulness is the basis for its truth.[114] Thinkers in the pragmatist tradition have included John Dewey, George Santayana, Quine and C. I. Lewis. Pragmatism has more recently been taken in new directions by Richard Rorty, John Lachs, Donald Davidson, Susan Haack, and Hilary Putnam.

Phenomenology

Edmund Husserl's phenomenology was an ambitious attempt to lay the foundations for an account of the structure of conscious experience in general.[115] An important part of Husserl's phenomenological project was to show that all conscious acts are directed at or about objective content, a feature that Husserl called intentionality.[116]

In the first part of his two-volume work, the Logical Investigations (1901), he launched an extended attack on psychologism. In the second part, he began to develop the technique of descriptive phenomenology, with the aim of showing how objective judgments are grounded in conscious experience—not, however, in the first-person experience of particular individuals, but in the properties essential to any experiences of the kind in question.[115]

He also attempted to identify the essential properties of any act of meaning. He developed the method further in Ideas (1913) as transcendental phenomenology, proposing to ground actual experience, and thus all fields of human knowledge, in the structure of consciousness of an ideal, or transcendental, ego. Later, he attempted to reconcile his transcendental standpoint with an acknowledgement of the intersubjective life-world in which real individual subjects interact. Husserl published only a few works in his lifetime, which treat phenomenology mainly in abstract methodological terms; but he left an enormous quantity of unpublished concrete analyses.

Husserl's work was immediately influential in Germany, with the foundation of phenomenological schools in Munich and Göttingen. Phenomenology later achieved international fame through the work of such philosophers as Martin Heidegger (formerly Husserl's research assistant), Maurice Merleau-Ponty, and Jean-Paul Sartre. Through the work of Heidegger and Sartre, Husserl's focus on subjective experience influenced aspects of existentialism.

Existentialism

Main article: Existentialism

Existentialism is a term applied to the work of a number of late 19th- and 20th-century philosophers who, despite profound doctrinal differences,[117][118] shared the belief that philosophical thinking begins with the human subject—not merely the thinking subject, but the acting, feeling, living human individual.[119] In existentialism, the individual's starting point is characterized by what has been called "the existential attitude", or a sense of disorientation and confusion in the face of an apparently meaningless or absurd world.[120] Many existentialists have also regarded traditional systematic or academic philosophy, in both style and content, as too abstract and remote from concrete human experience.[121][122]

Although they did not use the term, the 19th-century philosophers Søren Kierkegaard and Friedrich Nietzsche are widely regarded as the fathers of existentialism. Their influence, however, has extended beyond existentialist thought.[123][124][125]

The main target of Kierkegaard's writings was the idealist philosophical system of Hegel which, he thought, ignored or excluded the inner subjective life of living human beings. Kierkegaard, conversely, held that "truth is subjectivity", arguing that what is most important to an actual human being are questions dealing with an individual's inner relationship to existence. In particular, Kierkegaard, a Christian, believed that the truth of religious faith was a subjective question, and one to be wrestled with passionately.[126][127]

Although Kierkegaard and Nietzsche were among his influences, the extent to which the German philosopher Martin Heidegger should be considered an existentialist is debatable. In Being and Time he presented a method of rooting philosophical explanations in human existence (Dasein) to be analysed in terms of existential categories (existentiale); and this has led many commentators to treat him as an important figure in the existentialist movement. However, in The Letter on Humanism, Heidegger explicitly rejected the existentialism of Jean-Paul Sartre.

Sartre became the best-known proponent of existentialism, exploring it not only in theoretical works such as Being and Nothingness, but also in plays and novels. Sartre, along with Simone de Beauvoir, represented an avowedly atheistic branch of existentialism, which is now more closely associated with their ideas of nausea, contingency, bad faith, and the absurd than with Kierkegaard's spiritual angst. Nevertheless, the focus on the individual human being, responsible before the universe for the authenticity of his or her existence, is common to all these thinkers.

Structuralism and post-structuralism

Inaugurated by the linguist Ferdinand de Saussure, structuralism sought to clarify systems of signs through analyzing the discourses they both limit and make possible. Saussure conceived of the sign as being delimited by all the other signs in the system, and ideas as being incapable of existence prior to linguistic structure, which articulates thought. This led continental thought away from humanism, and toward what was termed the decentering of man: language is no longer spoken by man to express a true inner self, but language speaks man.

Structuralism aspired to the standing of a hard science, but its positivism soon came under fire from poststructuralism, a wide field of thinkers, some of whom had once been structuralists themselves but later came to criticize it. Structuralists believed, for example, that they could analyze systems from an external, objective standpoint, but the poststructuralists argued that this is incorrect: one cannot transcend structures, and thus analysis is itself determined by what it examines. Similarly, while structuralists treated the distinction between the signifier and the signified as crystalline, poststructuralists asserted that every attempt to grasp the signified results in more signifiers, so meaning is always in a state of being deferred, making an ultimate interpretation impossible.

Structuralism came to dominate continental philosophy throughout the 1960s and early 1970s, encompassing thinkers as diverse as Claude Lévi-Strauss, Roland Barthes and Jacques Lacan. Post-structuralism came to predominate from the 1970s onward, including thinkers such as Michel Foucault, Jacques Derrida, Gilles Deleuze and even Roland Barthes; it incorporated a critique of structuralism's limitations.

Thomism

Largely Aristotelian in its approach and content, Thomism is a philosophical tradition that follows the writings of Thomas Aquinas. His work has been read, studied, and disputed since the 13th century, especially by Roman Catholics. However, Aquinas has enjoyed a revived interest since the late 19th century, among both atheists (like Philippa Foot) and theists (like Elizabeth Anscombe).[128]

Thomist philosophers tend to be rationalists in epistemology, as well as metaphysical realists, and virtue ethicists. Human beings are rational animals whose good can be known by reason and pursued by the will. With regard to the soul, Thomists (like Aristotle) argue that soul or psyche is real and immaterial but inseparable from matter in organisms. Soul is the form of the body. Thomists accept all four of Aristotle's causes as natural, including teleological or final causes. In this way, though Aquinas argued that whatever is in the intellect begins in the senses, natural teleology can be discerned with the senses and abstracted from nature through induction.[129]

Contemporary Thomism contains a diversity of philosophical styles, from Neo-Scholasticism to Existential Thomism.[130] The so-called new natural lawyers, such as Germain Grisez and Robert P. George, have applied Thomistic legal principles to contemporary ethical debates, while the cognitive neuroscientist Walter Freeman, in a 2008 article in the journal Mind and Matter entitled "Nonlinear Brain Dynamics and Intention According to Aquinas," proposes that Thomism is the philosophical system of cognition most compatible with neurodynamics. The so-called Analytical Thomism of John Haldane and others encourages dialogue between analytic philosophy and broadly Aristotelian philosophy of mind, psychology, and hylomorphic metaphysics.[131] Other modern or contemporary Thomists include Eleonore Stump, Alasdair MacIntyre, and John Finnis.

The analytic tradition

The term analytic philosophy roughly designates a group of philosophical methods that stress detailed argumentation, attention to semantics, use of classical logic and non-classical logics and clarity of meaning above all other criteria. Some have held that philosophical problems arise through misuse of language or because of misunderstandings of the logic of our language, while some maintain that there are genuine philosophical problems and that philosophy is continuous with science. Michael Dummett in his Origins of Analytical Philosophy makes the case for counting Gottlob Frege's The Foundations of Arithmetic as the first analytic work, on the grounds that in that book Frege took the linguistic turn, analyzing philosophical problems through language. Bertrand Russell and G.E. Moore are also often counted as founders of analytic philosophy, beginning with their rejection of British idealism, their defense of realism and the emphasis they laid on the legitimacy of analysis.

Russell's classic works The Principles of Mathematics,[132] On Denoting and Principia Mathematica (with Alfred North Whitehead), aside from greatly promoting the use of mathematical logic in philosophy, set the ground for much of the research program in the early stages of the analytic tradition. They emphasized such problems as the reference of proper names, whether 'existence' is a property, the nature of propositions, the analysis of definite descriptions, and the foundations of mathematics, and they explored issues of ontological commitment and even metaphysical problems regarding time, the nature of matter, mind, persistence and change, which Russell often tackled with the aid of mathematical logic. At the beginning of the 20th century, Russell and Moore's philosophy developed as a critique of Hegel and his British followers in particular, and of grand systems of speculative philosophy in general, though by no means do all analytic philosophers reject Hegel's philosophy (see Charles Taylor) or speculative philosophy. Schools within the group include logical positivism and ordinary language philosophy, both markedly influenced by Russell and Wittgenstein's development of logical atomism, the former positively and the latter negatively.

In 1921, Ludwig Wittgenstein, who studied under Russell at Cambridge, published his Tractatus Logico-Philosophicus, which gave a rigidly "logical" account of linguistic and philosophical issues. At the time, he understood most of the problems of philosophy as mere puzzles of language, which could be solved by investigating and then minding the logical structure of language. Years later, he reversed a number of the positions he had set out in the Tractatus, most notably in his second major work, Philosophical Investigations (1953). The Investigations was influential in the development of "ordinary language philosophy," which was promoted by Gilbert Ryle, J.L. Austin, and a few others.

In the United States, meanwhile, the philosophy of Quine was having a major influence, notably through his paper "Two Dogmas of Empiricism". In that paper Quine criticized the distinction between analytic and synthetic statements, arguing that a clear conception of analyticity is unattainable. He argued for holism, the thesis that language, including scientific language, is a set of interconnected sentences, none of which can be verified on its own; rather, the sentences in the language depend on each other for their meaning and truth conditions. A consequence of Quine's approach is that language as a whole has only a thin relation to experience. Some sentences that refer directly to experience might be modified by sense impressions, but because the whole of language is theory-laden, modifying the language as a whole requires more than this. Nevertheless, most of the linguistic structure, even logic, can in principle be revised in order to better model the world.

Notable students of Quine include Donald Davidson and Daniel Dennett. Davidson devised a program for giving a semantics to natural language and thereby answering the philosophical conundrum "what is meaning?". A crucial part of the program was the use of Alfred Tarski's semantic theory of truth. Dummett, among others, argued that truth conditions should be dispensed with in the theory of meaning and replaced by assertability conditions. On this view, some propositions are neither true nor false, so such a theory of meaning entails a rejection of the law of the excluded middle. For Dummett, this entails antirealism, as Russell himself had pointed out in An Inquiry into Meaning and Truth.

By the 1970s, younger generations of analytic philosophers had renewed interest in many traditional philosophical problems. David Lewis, Saul Kripke, Derek Parfit and others took up traditional metaphysical problems, which they began exploring with the tools of logic and the philosophy of language. Prominent among those problems were free will, essentialism, the nature of personal identity, identity over time, the nature of the mind, the nature of causal laws, space-time, the properties of material beings, and modality. In universities where analytic philosophy has spread, these problems are still debated passionately. Analytic philosophers are also interested in the methodology of analytic philosophy itself; Timothy Williamson, Wykeham Professor of Logic at Oxford, for example, has published a book entitled The Philosophy of Philosophy. Some influential figures in contemporary analytic philosophy are Timothy Williamson, David Lewis, John Searle, Thomas Nagel, Hilary Putnam, Michael Dummett, Peter van Inwagen, Saul Kripke and Patricia Churchland. Analytic philosophy has sometimes been accused of not contributing to political debate or to traditional questions in aesthetics. However, with the appearance of A Theory of Justice by John Rawls and Anarchy, State, and Utopia by Robert Nozick, analytic political philosophy acquired respectability. Analytic philosophers have also shown depth in their investigations of aesthetics, with Roger Scruton, Nelson Goodman, Arthur Danto and others developing the subject into its current shape.

Applied philosophy

The ideas conceived by a society have profound repercussions on the actions the society takes. As Richard Weaver has argued, "ideas have consequences". The study of philosophy yields applications in ethics (applied ethics in particular) and in political philosophy. The political and economic philosophies of Confucius, Sun Zi, Chanakya, Ibn Khaldun, Ibn Rushd, Ibn Taimiyyah, Niccolò Machiavelli, Gottfried Wilhelm Leibniz, John Locke, Jean-Jacques Rousseau, Adam Smith, Karl Marx, John Stuart Mill, Leo Tolstoy, Mahatma Gandhi, Martin Luther King Jr., and others have all been used to shape and justify governments and their actions.

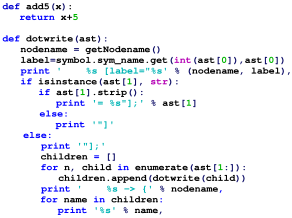

In the field of philosophy of education, progressive education as championed by John Dewey has had a profound impact on educational practices in the United States in the 20th century. Descendants of this movement include the current efforts in philosophy for children, which are part of philosophy education. Carl von Clausewitz's political philosophy of war has had a profound effect on statecraft, international politics, and military strategy in the 20th century, especially in the years around World War II. Logic has become crucially important in mathematics, linguistics, psychology, computer science, and computer engineering.

Other important applications can be found in epistemology, which aids in understanding the requisites for knowledge, sound evidence, and justified belief (important in law, economics, decision theory, and a number of other disciplines). The philosophy of science discusses the underpinnings of the scientific method and has affected the nature of scientific investigation and argumentation. As such, philosophy has fundamental implications for science as a whole. For example, the strictly empirical approach of Skinner's behaviorism shaped the approach of the American psychological establishment for decades. Deep ecology and animal rights examine the moral situation of humans as occupants of a world whose non-human occupants must also be taken into account. Aesthetics can help to interpret discussions of music, literature, the plastic arts, and the whole artistic dimension of life. In general, the various philosophies strive to provide practical activities with a deeper understanding of the theoretical or conceptual underpinnings of their fields.

Philosophy is often seen as an investigation into an area not yet sufficiently well understood to be its own branch of knowledge. For example, what were once philosophical pursuits have evolved into modern-day fields such as psychology, sociology, linguistics, and economics.

References

- Jenny Teichmann and Katherine C. Evans, Philosophy: A Beginner's Guide (Blackwell Publishing, 1999), p. 1: "Philosophy is a study of problems which are ultimate, abstract and very general. These problems are concerned with the nature of existence, knowledge, morality, reason and human purpose."

- A.C. Grayling (1999). "Editor's Introduction". In A.C. Grayling, ed. Philosophy 1: A Guide through the Subject. vol. 1. Oxford University Press. p. 1. ISBN 978-0-19-875243-1.

The aim of philosophical inquiry is to gain insight into questions about knowledge, truth, reason, reality, meaning, mind, and value. Other human endeavors explore aspects of these same questions, not least art and literature, but it is philosophy that mounts a direct assault upon them...

- Definition of "philosophy, n.". Oxford English Dictionary Online. June 2015. Oxford University Press. http://www.oed.com/view/Entry/142505?rskey=uk0M8u&result=1 (accessed August 05, 2015): "7. The study of the fundamental nature of knowledge, reality, and existence, and the basis and limits of human understanding; this considered as an academic discipline. (Now the usual sense.)"

- See Diogenes Laertius: "Lives of Eminent Philosophers", I, 12; Cicero: "Tusculanae disputationes", V, 8–9

- φιλοσοφία. Liddell, Henry George; Scott, Robert; A Greek–English Lexicon at the Perseus Project

- "Online Etymology Dictionary". Etymonline.com. Retrieved 22 August 2010.

- The definition of philosophy is: "1. orig., love of, or the search for, wisdom or knowledge 2. theory or logical analysis of the principles underlying conduct, thought, knowledge, and the nature of the universe". Webster's New World Dictionary (Second College ed.).

- Strong's Greek Dictionary 5385, http://biblehub.com/greek/5385.htm

- "Home : Oxford English Dictionary". oed.com.

- Anthony Quinton (1995). "The ethics of philosophical practice". In T. Honderich, ed. The Oxford Companion to Philosophy. Oxford University Press. p. 666. ISBN 978-0-19-866132-0.

Philosophy is rationally critical thinking, of a more or less systematic kind about the general nature of the world (metaphysics or theory of existence), the justification of belief (epistemology or theory of knowledge), and the conduct of life (ethics or theory of value). Each of the three elements in this list has a non-philosophical counterpart, from which it is distinguished by its explicitly rational and critical way of proceeding and by its systematic nature. Everyone has some general conception of the nature of the world in which they live and of their place in it. Metaphysics replaces the unargued assumptions embodied in such a conception with a rational and organized body of beliefs about the world as a whole. Everyone has occasion to doubt and question beliefs, their own or those of others, with more or less success and without any theory of what they are doing. Epistemology seeks by argument to make explicit the rules of correct belief formation. Everyone governs their conduct by directing it to desired or valued ends. Ethics, or moral philosophy, in its most inclusive sense, seeks to articulate, in rationally systematic form, the rules or principles involved.