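Pseudo-random number generators (PRNGs), such as the Mersenne Twister behind Python's random module, are recursive: each output is computed from the previous internal state. The simplest example is the linear congruential generator: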

xₙ₊₁ = (a·xₙ + c) mod m

This creates:

- 🔁 Hidden correlations — each number depends on the one before

- 📅 Periodicity — sequences eventually repeat

- 🧱 Exploration boundaries — AI can't truly explore

- 🎭 False reproducibility — same seed = same path
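The periodicity and same-seed points can be seen in a few lines of Python. The sketch below is a toy linear congruential generator with deliberately tiny parameters (a = 5, c = 3, m = 16, chosen here for illustration and not taken from any real library), so that the whole cycle is short enough to inspect:

```python
def lcg(seed, a=5, c=3, m=16):
    """Yield the LCG sequence x_{n+1} = (a * x_n + c) mod m forever."""
    x = seed
    while True:
        x = (a * x + c) % m
        yield x


def take(gen, n):
    """Return the next n values from a generator as a list."""
    return [next(gen) for _ in range(n)]


def period(seed, a=5, c=3, m=16):
    """Count the steps until the generator returns to its first output."""
    gen = lcg(seed, a, c, m)
    first = next(gen)
    steps = 1
    while next(gen) != first:
        steps += 1
    return steps


# Same seed, same path: two generators started at 7 agree forever.
run_a = take(lcg(7), 5)
run_b = take(lcg(7), 5)
print(run_a == run_b)   # True
print(period(7))        # 16, the full period for these parameters
```

Real generators use far larger moduli, so the cycle is much longer, but the structure is the same: the next value is a fixed function of the current one.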

AI deserves better.

import aleam as al

rng = al.Aleam()

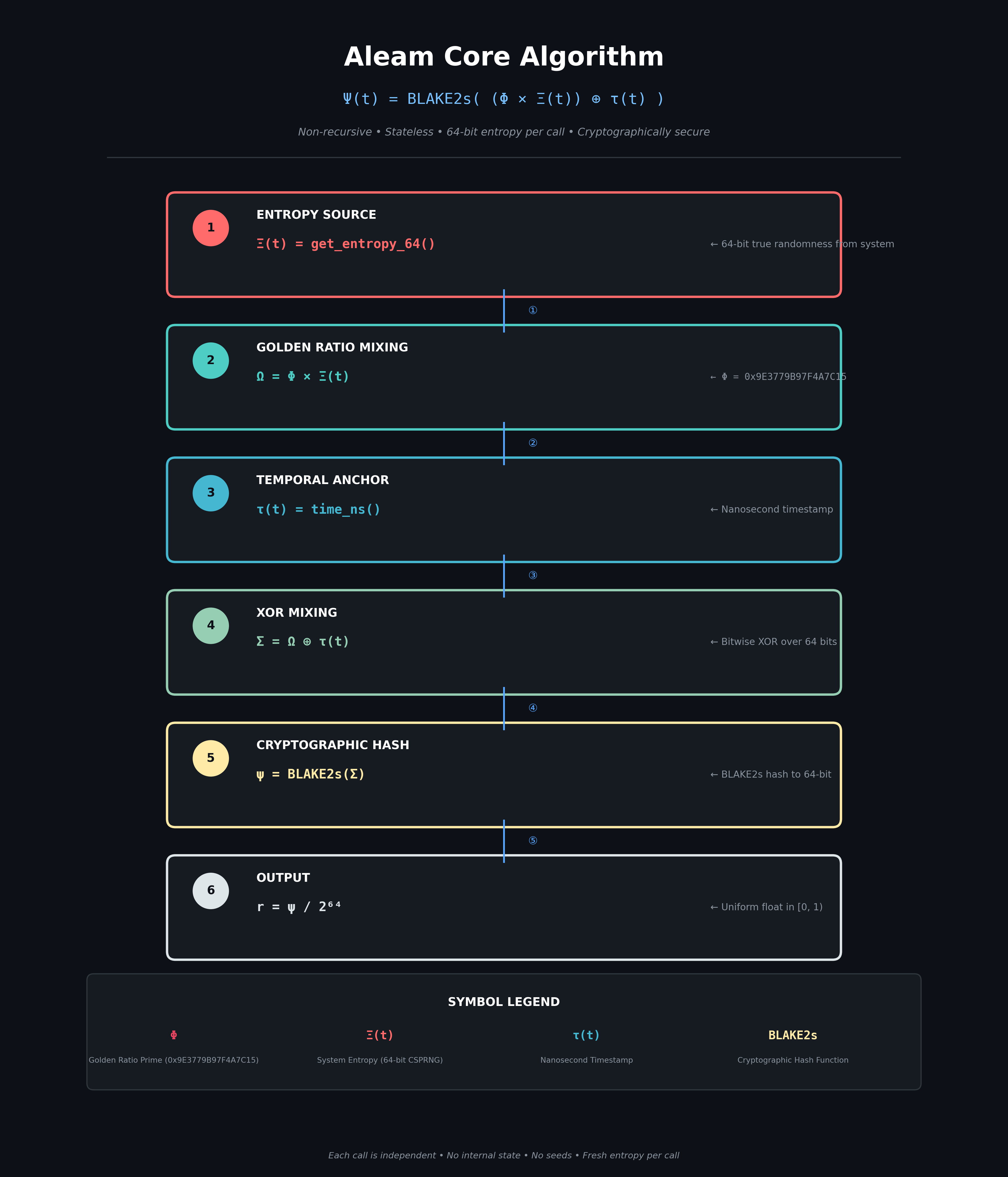

x = rng.random() # True randomness. No recursion. No state.Aleam implements the proven equation:

Ψ(t) = BLAKE2s( (Φ × Ξ(t)) ⊕ τ(t) )

| Symbol | Meaning |
|---|---|
| Φ | 64-bit golden-ratio constant, the odd integer nearest 2⁶⁴/φ (0x9E3779B97F4A7C15) |
| Ξ(t) | 64 bits of entropy from the system CSPRNG |
| τ(t) | Nanosecond timestamp |
| ⊕ | XOR mixing |
| BLAKE2s | Cryptographic hash |

Properties:

| 🔄 Non-recursive | 🎲 Stateless | 🔒 Cryptographically Secure | 🧠 AI-Optimized |
|---|---|---|---|
| Each call independent | No seeds, no state | Powered by BLAKE2s | Gradient noise, latent sampling |

| Step | Operation | Description |
|---|---|---|
| 1 | Ξ(t) = get_entropy_64() | Pull 64-bit true entropy from system |
| 2 | Ω = Φ × Ξ(t) | Golden ratio mixing (bijective, maximally equidistributed) |
| 3 | τ = time.time_ns() | Nanosecond timestamp for uniqueness |
| 4 | Σ = Ω ⊕ τ | XOR mixing over 64 bits |
| 5 | ψ = BLAKE2s(Σ) | Cryptographic hash to 64-bit output |
| 6 | r = ψ / 2⁶⁴ | Map to floating point [0, 1) |
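The six steps can be approximated in pure Python with the standard library. This is a minimal sketch, not Aleam's C++ implementation: `os.urandom` stands in for `get_entropy_64()`, and the byte order, the 8-byte digest size, and the 53-bit float mapping are assumptions made here. The function names `aleam_like_uint64` and `aleam_like_random` are hypothetical.

```python
import hashlib
import os
import time

PHI = 0x9E3779B97F4A7C15   # golden-ratio constant from the symbol table
MASK64 = (1 << 64) - 1     # keeps intermediate values within 64 bits


def aleam_like_uint64():
    """One stateless draw following steps 1-5 (illustrative stand-in)."""
    xi = int.from_bytes(os.urandom(8), "little")   # 1. 64 bits from the OS
    omega = (PHI * xi) & MASK64                    # 2. multiply modulo 2**64
    tau = time.time_ns() & MASK64                  # 3. nanosecond timestamp
    sigma = omega ^ tau                            # 4. XOR mixing
    digest = hashlib.blake2s(sigma.to_bytes(8, "little"), digest_size=8).digest()
    return int.from_bytes(digest, "little")        # 5. 64-bit hash output


def aleam_like_random():
    """Step 6: map a 64-bit draw to a float in [0, 1)."""
    # Dividing a full 64-bit integer by 2**64 can round up to 1.0 in double
    # precision, so this sketch keeps only the top 53 bits before scaling.
    return (aleam_like_uint64() >> 11) / (1 << 53)


print(aleam_like_random())
```

Nothing is carried from one call to the next: each draw pulls fresh bytes from the operating system, which is what "stateless" means in the properties table.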

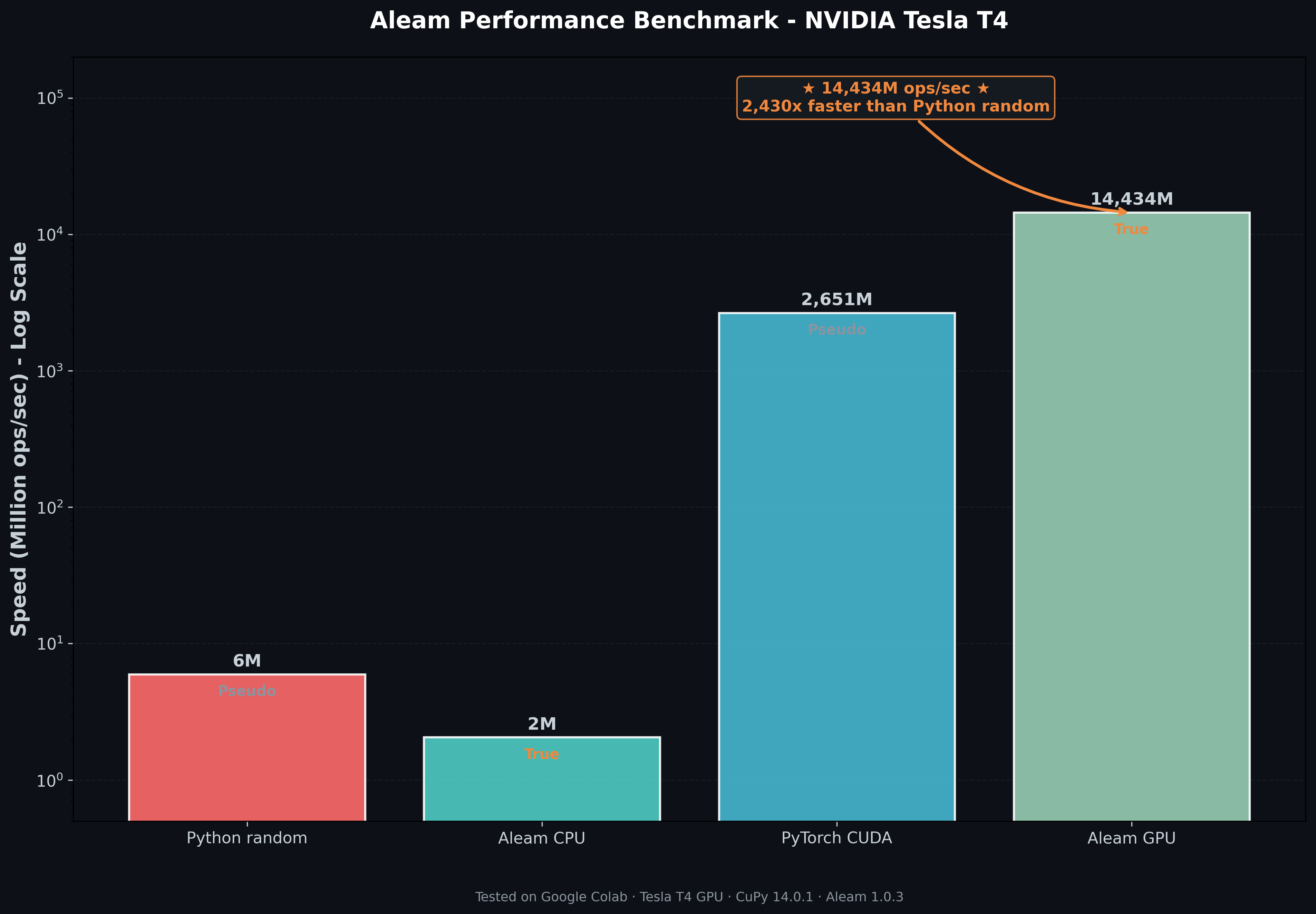

| Generator | Speed (M ops/sec) | Randomness Type |
|---|---|---|
| Python random | 5.94 | Pseudo |
| Aleam CPU | 2.05 | True |
| PyTorch CUDA | 2,650.81 | Pseudo |
| Aleam GPU | 14,434.25 | True |

Tested on NVIDIA Tesla T4 (Google Colab) · CuPy 14.0.1 · Aleam 1.0.3

💡 Key Insight: Aleam GPU delivers 14.4 BILLION true random numbers per second — 2,430x faster than Python random and 5.4x faster than PyTorch CUDA!
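For a rough CPU-side comparison on your own machine, a throughput figure in the same unit (M ops/sec) can be measured as below. This is a hypothetical helper written for illustration, not the project's benchmark script, and the number it reports includes Python loop overhead.

```python
import random
import time


def million_ops_per_sec(fn, n_calls=200_000):
    """Time n_calls invocations of fn and return throughput in M ops/sec."""
    start = time.perf_counter()
    for _ in range(n_calls):
        fn()
    elapsed = time.perf_counter() - start
    return n_calls / elapsed / 1e6


rate = million_ops_per_sec(random.random)
print(f"Python random: {rate:.2f} M ops/sec")
```

Passing `rng.random` from an Aleam instance instead of `random.random` should give the corresponding "Aleam CPU" figure for your hardware.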

After 2.55 million samples, Aleam passed all 10 statistical tests. Six of the results are summarized below:

| Test | Result | Status |
|---|---|---|
| Mean | 0.499578 | ✓ |
| Variance | 0.083154 | ✓ |
| Chi-Square (Uniformity) | 21.40 (critical 30.14) | ✓ PASS |
| Max Autocorrelation | 0.0094 | ✓ EXCELLENT |
| π Estimation Error | 0.0105% | ✓ EXCELLENT |
| Shannon Entropy | 0.9999 | ✓ NEAR-PERFECT |
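Several of these statistics are easy to recompute. The sketch below is one way to do it with the standard library, not the project's test suite. The critical value 30.14 matches 19 degrees of freedom at the 5% level, so 20 equal-width bins are assumed; the lag range and sample size are also assumptions, and `random.SystemRandom` is only a stand-in data source.

```python
import random


def uniformity_report(samples, bins=20, max_lag=5):
    """Compute basic uniformity statistics for floats in [0, 1)."""
    n = len(samples)
    mean = sum(samples) / n
    variance = sum((x - mean) ** 2 for x in samples) / n

    # Chi-square against equal-width bins; 20 bins gives 19 degrees of
    # freedom, whose 5% critical value is about 30.14.
    counts = [0] * bins
    for x in samples:
        counts[min(int(x * bins), bins - 1)] += 1
    expected = n / bins
    chi_square = sum((c - expected) ** 2 / expected for c in counts)

    # Largest absolute autocorrelation over lags 1..max_lag.
    def autocorr(lag):
        cov = sum((samples[i] - mean) * (samples[i + lag] - mean)
                  for i in range(n - lag))
        return cov / (n * variance)

    max_autocorr = max(abs(autocorr(lag)) for lag in range(1, max_lag + 1))

    # Monte Carlo estimate of pi from consecutive (x, y) pairs.
    pairs = n // 2
    inside = sum(1 for i in range(pairs)
                 if samples[2 * i] ** 2 + samples[2 * i + 1] ** 2 < 1.0)
    pi_estimate = 4.0 * inside / pairs

    return {"mean": mean, "variance": variance, "chi_square": chi_square,
            "max_autocorr": max_autocorr, "pi_estimate": pi_estimate}


# Stand-in data source; swap in rng.random from an Aleam instance to test it.
source = random.SystemRandom()
report = uniformity_report([source.random() for _ in range(100_000)])
for name, value in report.items():
    print(f"{name}: {value:.6f}")
```

For an ideal uniform source the mean is 1/2 and the variance is 1/12 ≈ 0.083333, which is the reference point for the first two rows of the table.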

"True randomness is not a bug — it's a feature."

    pip install aleam

Basic usage:

    import aleam as al

    # Create a true random generator
    rng = al.Aleam()

    # Core randomness
    x = rng.random()                        # 0.90324326
    u64 = rng.random_uint64()               # 12345678901234567890
    y = rng.randint(1, 100)                 # 86
    z = rng.choice(['AI', 'ML', 'Aleam'])   # 'ML'
    u = rng.uniform(5.0, 10.0)              # 7.234
    n = rng.gauss(0.0, 1.0)                 # -0.432

    # Sampling (requires list, not range)
    population = list(range(10000))
    batch = rng.sample(population, 64)      # Random 64 unique indices

    # Shuffle list in-place
    items = [1, 2, 3, 4, 5]
    rng.shuffle(items)                      # [3, 1, 5, 2, 4]

    # Random bytes (returns list of integers)
    key = rng.random_bytes(32)              # 32 random bytes as list

| Method | Description | Example |
|---|---|---|
| random() | True random float in [0, 1) | rng.random() |
| random_uint64() | True random 64-bit integer | rng.random_uint64() |
| randint(a, b) | Random integer in [a, b] | rng.randint(1, 100) |
| choice(seq) | Random element from sequence | rng.choice(['a', 'b', 'c']) |
| shuffle(lst) | Shuffle list in-place | rng.shuffle(my_list) |
| sample(pop, k) | Sample k unique elements | rng.sample(list(range(100)), 10) |
| random_bytes(n) | Generate n random bytes (as list) | rng.random_bytes(32) |

All distributions are available as methods on the Aleam instance:

| Distribution | Method | Example |
|---|---|---|
| Uniform | uniform(low, high) | rng.uniform(5, 10) |
| Normal (Gaussian) | gauss(mu, sigma) | rng.gauss(0, 1) |
| Exponential | exponential(rate) | rng.exponential(1.0) |
| Beta | beta(alpha, beta) | rng.beta(2, 5) |
| Gamma | gamma(shape, scale) | rng.gamma(2, 1) |
| Poisson | poisson(lam) | rng.poisson(3.5) |
| Laplace | laplace(loc, scale) | rng.laplace(0, 1) |
| Logistic | logistic(loc, scale) | rng.logistic(0, 1) |
| Log-Normal | lognormal(mu, sigma) | rng.lognormal(0, 1) |
| Weibull | weibull(shape, scale) | rng.weibull(1.5, 1) |
| Pareto | pareto(alpha, scale) | rng.pareto(2, 1) |
| Chi-square | chi_square(df) | rng.chi_square(5) |
| Student's t | student_t(df) | rng.student_t(3) |
| F-distribution | f_distribution(df1, df2) | rng.f_distribution(5, 10) |
| Dirichlet | dirichlet(alpha) | rng.dirichlet([1, 2, 3]) |
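Several of these distributions can be derived from a single uniform draw by inverting the cumulative distribution function. The table does not say how Aleam implements them, so the following is only a textbook sketch of that technique; `random.SystemRandom` stands in for an Aleam generator, and the function names mirror the table purely for readability.

```python
import math
import random

_uniform = random.SystemRandom().random   # stand-in uniform source in [0, 1)


def exponential(rate):
    """Exponential(rate) draw via the inverse CDF: -ln(1 - u) / rate."""
    return -math.log(1.0 - _uniform()) / rate


def logistic(loc, scale):
    """Logistic(loc, scale) draw via the inverse CDF: loc + scale * ln(u / (1 - u))."""
    u = _uniform()
    while u == 0.0:          # avoid log(0) at the lower edge
        u = _uniform()
    return loc + scale * math.log(u / (1.0 - u))


draws = [exponential(2.0) for _ in range(50_000)]
print(sum(draws) / len(draws))   # close to 1 / rate = 0.5
```

Because the uniform draw lies in [0, 1), the argument of the logarithm in `exponential` is always in (0, 1], so no guard is needed there.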

| Class | Methods | Use Case |
|---|---|---|
| AIRandom | gradient_noise(), latent_vector(), dropout_mask(), augmentation_params(), mini_batch(), exploration_noise() | Training, augmentation, RL exploration |
| GradientNoise | add_noise(), reset(), current_scale() | Gradient noise injection with decay |
| LatentSampler | sample(), sample_one(), interpolate() | Latent space sampling for VAEs/GANs |
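To make "gradient noise injection with decay" concrete, here is a small stand-alone sketch of the idea. It is not Aleam's GradientNoise class: the class name `DecayingNoise`, the constructor parameters, and the schedule scale / (1 + step)^decay are all assumptions for illustration, and `random.SystemRandom` stands in for an Aleam generator.

```python
import random


class DecayingNoise:
    """Hypothetical stand-in: Gaussian gradient noise with a decaying scale."""

    def __init__(self, initial_scale=0.1, decay=0.55):
        self.initial_scale = initial_scale
        self.decay = decay
        self.step = 0
        self._source = random.SystemRandom()   # stand-in for an Aleam generator

    def current_scale(self):
        """Noise standard deviation at the current step."""
        return self.initial_scale / (1 + self.step) ** self.decay

    def add_noise(self, gradients):
        """Return gradients plus zero-mean Gaussian noise, then advance one step."""
        scale = self.current_scale()
        noisy = [g + self._source.gauss(0.0, scale) for g in gradients]
        self.step += 1
        return noisy

    def reset(self):
        """Restart the decay schedule from step 0."""
        self.step = 0


noise = DecayingNoise()
print(noise.add_noise([0.5, -0.25, 0.0]))
print(noise.current_scale())
```

The noise is largest early in training, when exploration matters most, and shrinks as the step count grows.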

Module-level functions that return numpy arrays directly:

| Function | Description | Example |
|---|---|---|
| random_array(shape) | Uniform random array | al.random_array((100, 100)) |
| randn_array(shape, mu, sigma) | Normal random array | al.randn_array((1000,), 0, 1) |
| randint_array(shape, low, high) | Integer random array | al.randint_array((50,), 0, 10) |

Aleam provides true randomness to ML frameworks via true random seeds.

PyTorch:

    import torch
    import aleam as al

    # Get true random seed from Aleam
    rng = al.Aleam()
    seed = rng.random_uint64()

    # Set PyTorch seed
    torch.manual_seed(seed)

    # Generate tensors on GPU
    tensor = torch.randn(100, 100, device='cuda')

TensorFlow:

    import tensorflow as tf
    import aleam as al

    # Get true random seed from Aleam
    rng = al.Aleam()
    seed = rng.random_uint64()

    # Set TensorFlow seed
    tf.random.set_seed(seed)

    # Generate tensors
    tensor = tf.random.normal((100, 100))

JAX:

    import jax
    import aleam as al

    # Get true random seed from Aleam
    # (masked so that it fits in a signed 64-bit integer for JAX)
    rng = al.Aleam()
    seed = rng.random_uint64() & 0x7FFFFFFFFFFFFFFF

    # Create JAX key
    key = jax.random.key(seed)

    # Generate tensors
    tensor = jax.random.normal(key, (100, 100))

CuPy:

    import cupy as cp
    import aleam as al

    # Get true random seed from Aleam
    rng = al.Aleam()
    seed = rng.random_uint64()

    # Set CuPy seed
    cp.random.seed(seed)

    # Generate 100 million random numbers on GPU from the true random seed
    arr = cp.random.randn(10000, 10000)  # 14.4B ops/sec!

Install from PyPI:

    pip install aleam

With framework extras:

    # PyTorch
    pip install aleam[torch]

    # TensorFlow
    pip install aleam[tensorflow]

    # CuPy (for GPU acceleration)
    pip install aleam[cupy]

    # All frameworks
    pip install aleam[all]

From source:

    git clone https://github.com/fardinsabid/aleam.git
    cd aleam
    pip install .

Project structure:

    aleam/

    │
    ├── .github/
    │   └── workflows/
    │       ├── tests.yml
    │       ├── publish.yml
    │       ├── security.yml
    │       └── docs.yml
    │
    ├── aleam/
    │   ├── __init__.py
    │   └── py.typed
    │
    ├── src/
    │   └── aleam/
    │       ├── bindings/
    │       │   ├── module.cpp
    │       │   └── exports.h
    │       │
    │       ├── core/
    │       │   ├── aleam_core.h
    │       │   ├── aleam_core.cpp
    │       │   ├── constants.h
    │       │   └── utils.h
    │       │
    │       ├── entropy/
    │       │   ├── entropy.h
    │       │   ├── entropy_linux.h
    │       │   ├── entropy_windows.h
    │       │   └── entropy_darwin.h
    │       │
    │       ├── hash/
    │       │   ├── blake2s.h
    │       │   └── blake2s_config.h
    │       │
    │       ├── distributions/
    │       │   ├── distributions.h
    │       │   ├── distributions.cpp
    │       │   ├── normal.h
    │       │   ├── exponential.h
    │       │   ├── beta.h
    │       │   ├── gamma.h
    │       │   ├── poisson.h
    │       │   ├── laplace.h
    │       │   ├── logistic.h
    │       │   ├── lognormal.h
    │       │   ├── weibull.h
    │       │   ├── pareto.h
    │       │   ├── chi_square.h
    │       │   ├── student_t.h
    │       │   ├── f_distribution.h
    │       │   └── dirichlet.h
    │       │
    │       ├── arrays/
    │       │   ├── arrays.h
    │       │   ├── arrays.cpp
    │       │   └── array_utils.h
    │       │
    │       ├── ai/
    │       │   ├── ai.h
    │       │   ├── ai.cpp
    │       │   ├── gradient_noise.h
    │       │   ├── latent_sampler.h
    │       │   └── augmentation.h
    │       │
    │       ├── integrations/
    │       │   ├── integrations.h
    │       │   ├── integrations.cpp
    │       │   ├── torch_integration.h
    │       │   ├── torch_integration.cpp
    │       │   ├── tensorflow_integration.h
    │       │   ├── tensorflow_integration.cpp
    │       │   ├── jax_integration.h
    │       │   ├── jax_integration.cpp
    │       │   ├── cupy_integration.h
    │       │   ├── cupy_integration.cpp
    │       │   ├── pandas_integration.h
    │       │   ├── pandas_integration.cpp
    │       │   ├── polars_integration.h
    │       │   ├── polars_integration.cpp
    │       │   ├── xarray_integration.h
    │       │   ├── xarray_integration.cpp
    │       │   ├── pymc_integration.h
    │       │   ├── pymc_integration.cpp
    │       │   ├── dask_integration.h
    │       │   └── dask_integration.cpp
    │       │
    │       └── cuda/
    │           ├── cuda_kernels.h
    │           ├── cuda_kernels.cu
    │           ├── cuda_uniform.cu
    │           ├── cuda_normal.cu
    │           └── cuda_utils.h
    │
    ├── include/
    │   └── aleam/
    │       └── aleam.h
    │
    ├── tests/
    │   ├── test_core.py
    │   ├── test_ai.py
    │   └── test_statistical.py
    │
    ├── benchmarks/
    │   └── benchmark_core.py
    │
    ├── assets/
    │   └── images/
    │       ├── benchmarks/
    │       │   └── cpu_vs_gpu.png
    │       └── diagrams/
    │           └── algorithm.png
    │
    ├── examples/
    │   ├── basic_usage.py
    │   ├── ai_ml_features.py
    │   ├── array_operations.py
    │   ├── distributions.py
    │   ├── monte_carlo_pi.py
    │   ├── reinforcement_learning.py
    │   ├── cuda_integration.py
    │   ├── pytorch_integration.py
    │   └── tensorflow_integration.py
    │
    ├── docs/
    │   ├── ALEAM_RESEARCH_PAPER.md
    │   ├── CHANGELOG.md
    │   ├── index.md
    │   ├── INSTALLATION.md
    │   └── ROADMAP.md
    │
    ├── setup.py
    ├── pyproject.toml
    ├── MANIFEST.in
    ├── requirements.txt
    ├── requirements-dev.txt
    ├── LICENSE
    ├── README.md
    ├── SECURITY.md
    ├── CONTRIBUTING.md
    ├── CODE_OF_CONDUCT.md
    └── .gitignore

A: True randomness is slower than pseudo-random — that's expected. You're trading speed for genuine entropy. On GPU, Aleam achieves 14.4B ops/sec, far exceeding CPU pseudo-random speeds.

A: No. Aleam is stateless by design. Use Python's random module if you need reproducibility.

A: Yes. Each call consumes 64 bits of true entropy and passes through BLAKE2s.

A: Yes! Use CuPy with true random seeds from Aleam:

    import cupy as cp
    import aleam as al

    seed = al.Aleam().random_uint64()
    cp.random.seed(seed)
    arr = cp.random.randn(10000, 10000)  # 14.4B ops/sec

A: The C++ bindings accept only Python lists, not range objects. Use list(range(10000)) instead of range(10000).

A: It returns a Python list of integers (0-255), not a bytes object.
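If a bytes object is needed (for example, to pass a key to another library), the list converts directly with the built-in `bytes` constructor. In this sketch `os.urandom` only produces a stand-in list so that the example runs without Aleam installed; `to_bytes` is a hypothetical helper name.

```python
import os


def to_bytes(values):
    """Convert a list of integers in 0..255 into an immutable bytes object."""
    return bytes(values)


# Stand-in for rng.random_bytes(32): a list of 32 integers in 0..255.
as_list = list(os.urandom(32))
key = to_bytes(as_list)
print(type(key).__name__, len(key))   # bytes 32
```

`bytes()` raises ValueError if any element falls outside 0..255, so the conversion also acts as a sanity check on the input.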

- ✅ Use for AI research, exploration, and creative projects

- ✅ Use for scientific simulations requiring true randomness

- ✅ Use for cryptographic applications

- ❌ Do not use for security-critical systems without additional entropy sources

MIT License — see LICENSE for details.

| Resource | Link |
|---|---|
| 📦 PyPI | pypi.org/project/aleam |
| 🐛 Issues | GitHub Issues |
| 📖 Documentation | GitHub Docs |
| 📄 Research Paper | ALEAM_RESEARCH_PAPER.md |